By Kevin Deutsch

A controversial app that scrapes billions of social media photos to identify criminal suspects has suffered a major data breach, with hackers retrieving a list of every client using the software, its makers said Wednesday.

The facial recognition app, created by Clearview AI, has compiled more than 3 billion facial images from the Internet for use by private clients and government agencies, spurring dire warnings from civil rights and privacy advocates.

The NYPD’s Facial Identification Section has said it tried out the app but no longer uses it. A number of other law enforcement agencies, as well as banking companies, have acknowledged using the technology.

As the country’s largest police force, the NYPD has been an early adopter of facial recognition software, the capabilities of which have expanded dramatically during the past year.

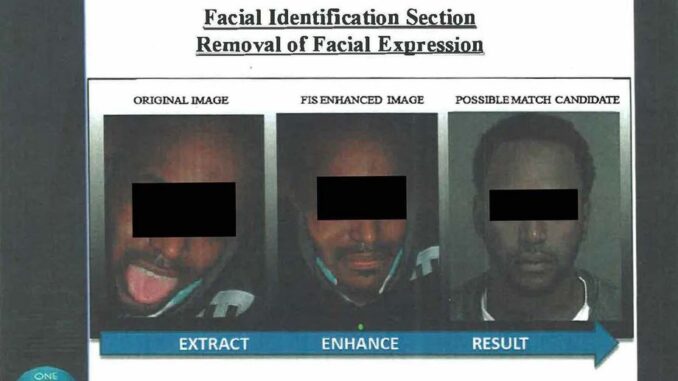

The department has even launched a standalone Facial Identification Section, tasked with wielding the technology in pursuit of lawbreakers.

Civil libertarians have blasted the city for revealing little about how its agencies use facial recognition in the five boroughs. And alleged civil rights and privacy abuses have already been uncovered.

A new report from Georgetown Law’s Center on Privacy and Technology, for instance, revealed the NYPD’s alteration of suspect images, while other reports highlighted the department’s alleged improper storage of minors’ photos.

State Senator Brad Hoylman (D/WF-Manhattan), who has sponsored legislation that would institute a moratorium on facial recognition use by law enforcement in New York, said the data breach at Clearview AI showed the danger of facial recognition tech.

“They couldn’t protect their own customer information from hackers – we certainly can’t trust them to protect billions of photos they store of you and I,” Hoylman said. “Right now, facial recognition technology is like the wild west: there’s no regulation and no protections for our privacy and civil liberties.”

According to its developers, the Clearview AI app allows law enforcement and other approved users to match photos from the app’s database to potential suspects in investigations.

The company keeps scraped photos even after they are deleted from the Internet or their owners’ accounts are set to private, privacy advocates said.

On its website, Clearview says its “image search technology has been independently tested for accuracy and evaluated for legal compliance by nationally recognized authorities” and “has achieved the highest standards of performance on every level.”

The company said it corrected the software issue that allowed the breach.